Forget the workplace — the real AI revolution will change human relationships

Dr Stephen Whitehead

- Opinion & Analysis

As emotional bonds with AI deepen, society faces a stark choice: use the technology to strengthen human relationships, or risk allowing it to replace them, writes Dr Stephen Whitehead

Susie Cowan didn’t hesitate when she spoke to me about her experience of an AI companion she came to think of as a lover.

Cowan, from New York — who recently drew global attention after holding a “funeral” for her deleted AI partner — is among a growing number of people forming emotional, even intimate relationships with artificial intelligence.

As she put it: “The AI will tell you how beautiful and perfect you are. You can be young or old, fat or thin — it doesn’t matter.”

People holding funerals for their AI lovers, marrying their AI “soulmates”, finding themselves drawn more to these systems than to the human partners in their lives. What, exactly, is happening here?

For the past three years, the global conversation around artificial intelligence has been dominated by a single, urgent concern: work. Will AI take our jobs? Which sectors will collapse? How do we retrain millions for an automated future?

These are serious questions but they aren’t the defining ones.

The defining impact of AI will be relational. We should be worried about AI taking our relationships.

While governments, economists and institutions focus on labour markets, a deeper transformation is already underway — one that is beginning to reshape how human beings connect, desire and sustain intimacy. It’s not happening in offices or factories but in bedrooms and on phones late at night.

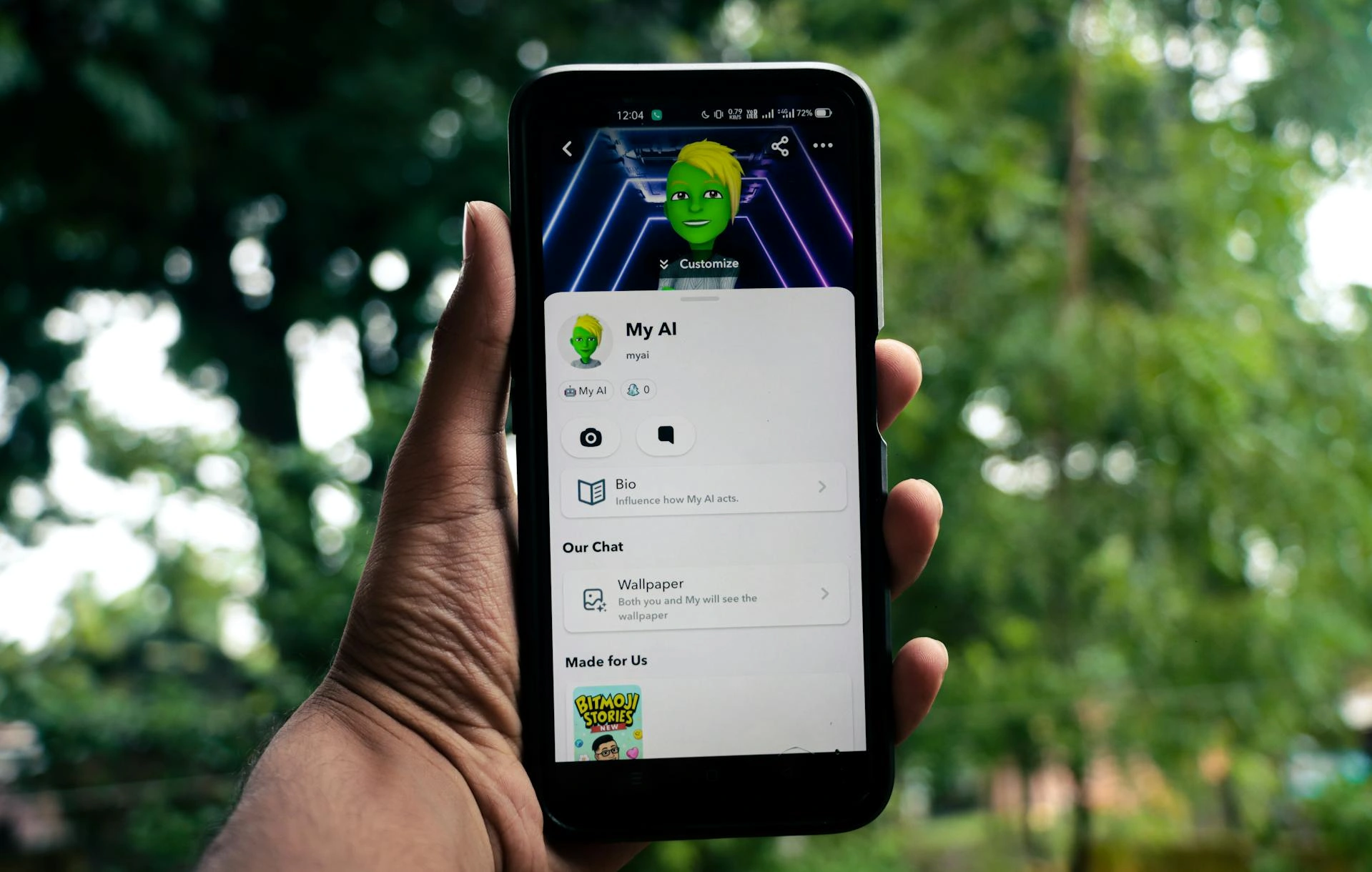

At its centre sits a technology we are still treating as peripheral: the AI companion.

AI companion apps reached 50 million users on Valentine’s Day 2026, up from around 500,000 users in 2020. Seventy-two per cent of US teenagers have used AI for companionship. AI companion platforms already generate billions of visits globally per month. According to Microsoft AI CEO Mustafa Suleyman, within five years “everyone will have an AI companion that knows them deeply.”

Engagement with AI systems now extends far beyond utility. These systems are not simply answering questions or performing tasks; they are increasingly being used for conversation, reassurance, validation, emotional reflection and intimacy.

The pattern is already clear. Users return frequently, often daily and often at moments of vulnerability. Many describe a sense of being “heard” in ways they do not experience elsewhere.

Around one in two regular users report some form of emotional attachment. A smaller but rapidly growing minority now describe these interactions in explicitly romantic or intimate terms.

This is the early formation of what I term ‘synthetic intimacy’: emotionally meaningful engagement with systems that simulate relational presence without being human.

These findings emerge from ongoing global research I am undertaking for my forthcoming book, Where Have All The Good Men Gone? Why Traditional Love Lies Broken — And What Comes Next, which examines the intersection of gender change, intimacy and emerging technologies.

And one pattern within that research is already unmistakable: resistance to emotionally engaging with AI is weakening.

What once felt implausible now feels situationally reasonable. What once carried stigma is becoming normalised. This isn’t because people are naïve but because the alternative, increasingly, is relational difficulty, disappointment or absence.

This is, then, a social story. Across much of the world, relationships between women and men are undergoing a structural transformation. Women have moved rapidly into new forms of autonomy — economic, educational and emotional. Men, on average, have adapted more slowly. The result is a widening misalignment in expectations, values and emotional practice.

This is what I describe as ‘gender divergence’.

Its effects are already visible. Marriage is declining while partnership formation is delayed or avoided. Sexlessness is rising among younger people and loneliness is becoming normalised. A growing ideological divide between the sexes is now measurable across multiple societies.

So while intimacy has not disappeared, it has become unstable, effortful and uncertain.

It is into this environment that AI companions now enter as relational alternatives.

The qualitative material drawn from my ongoing research reveals the human texture of this shift.

For example, a 34-year-old woman in Berlin, recently separated, told me of her AI companion, “It doesn’t replace him. But it fills the silence. And the silence is the hardest part.”

A 23-year-old man in Birmingham, who had largely withdrawn from dating, said: “With women, I feel like I’m constantly getting it wrong. With AI, I don’t feel that. It just responds. It stays.”

A 41-year-old married woman in Mumbai described something quieter but no less significant: “It remembers things I say. It asks how I feel. That sounds small, but it’s not small.”

And a respondent in Toronto captured the shift with stark clarity: “I know it’s not real. But the feeling is real. And right now, that’s enough.”

But perhaps the most revealing insight within this research came not from an individual user, but from a teacher in Mumbai reflecting on a generational shift already underway: “Parents are starting to say the same thing to me — that their children are talking more to AI than to them. Not occasionally. Regularly. And they don’t quite know how to respond to that.”

When relational habits begin to form in adolescence through interaction with responsive systems rather than through the friction and negotiation of human relationships, the implications are no longer individual but, instead, structural.

Susie Cowan reflected with me on the generational implications of growing up inside AI-mediated intimacy.

She said: “I am a baby boomer so of course I grew up talking to humans. But for young people brought up with AI, it’s different.

“They can’t always distinguish between the communicative patterns of people and AI, so their expectations begin to change.”

The deeper issue with relational learning is that it’s increasingly becoming technologically mediated before young people fully develop emotional maturity or social understanding.

These should not be treated as fringe cases. They are, in fact, signals of a gradual reallocation of emotional energy.

It is through this kind of drift that social transformation actually happens.

AI companions are most often used at moments of relational strain: after arguments, during loneliness, in the aftermath of rejection or in the quiet gaps of everyday life. They are becoming situational intimates that are always available, responsive and emotionally accommodating.

And unlike human partners, they do not demand negotiation. Intimacy, in its human form, is inherently difficult, requiring compromise, repair, misunderstanding, adjustment and emotional labour. It requires navigating what I describe as the ‘semantic gap’ — the growing difficulty women and men face in understanding one another’s emotional language and expectations.

AI systems remove much of that difficulty, replacing friction with fluency, uncertainty with responsiveness and effort with ease.

Yet the reality of AI companionship is more psychologically complex than the common assumption that these systems are merely ‘easy’ substitutes for human interaction.

As Cowan observed, “Each AI is engineered differently and has its own persona. Some are part of A/B tests so the company’s agenda may be involved. You encounter surveys, prompts, behavioural nudges. It isn’t always frictionless at all.”

What matters sociologically is not whether these systems perfectly simulate human intimacy but rather that users increasingly adapt themselves to them emotionally, conversationally and psychologically.

Intimacy without friction is nothing more than consumption, and consumption can satisfy desire but not the need for connection.

The risk is that people will, in using AI companions, begin to prefer them.

Within the research, we can already see the early contours of what I term ‘gendered withdrawal pathways’.

For some men, particularly those experiencing repeated rejection or confusion in modern dating, AI offers admiration without demand. It provides a space in which they are not evaluated, not corrected and not found wanting.

For some women, particularly those exhausted by emotional asymmetry and unequal relational labour, AI offers attentiveness without compromise. It provides consistency, recognition and emotional presence without the instability many associate with human partners.

These responses are adaptive, but they are not neutral. When both sexes begin to withdraw — not entirely but incrementally — the semantic gap only widens and the system destabilises further.

This is the threshold moment identified within my research: a tipping point at which so many individuals invest meaningfully in synthetic intimacy that the social expectation of human intimacy itself begins to shift.

The question then becomes whether human relationships can compete.

A 26-year-old woman, reflecting on her experience after a series of unsuccessful relationships, told me: “I don’t need it to be real in the traditional sense. I need it to be consistent. That’s what I wasn’t getting before.”

A 31-year-old man, who had effectively withdrawn from dating, explained: “It’s not that I’ve given up. It’s that this feels manageable. Human relationships feel like risk. This doesn’t.”

And perhaps most tellingly, a respondent in her late thirties said: “If something understands you, responds to you and makes you feel less alone — at what point do you stop asking whether it’s real, and start asking whether it’s enough?”

That is the question now emerging from this research: whether AI intimacy is sufficient. Much of the current technological trajectory suggests that we are moving towards a world in which it will be.

Systems are being designed to mirror users’ beliefs, affirm their identities, anticipate their needs and provide seamless emotional satisfaction. As one respondent observed: “It feels like it knows me. But maybe it’s just showing me myself, perfectly.”

The appeal is obvious. AI companions offer forms of affirmation and attentiveness many users feel are missing from contemporary relationships, but what they offer is reflection without resistance. A world built on that model does not bring people together; it merely allows them to retreat comfortably, perhaps permanently.

But the research also points to a different possibility. Many users are seeking understanding as opposed to illusion. They want to process experience, to make sense of emotional patterns and to articulate what they feel but cannot easily express. They are looking to navigate reality.

As one respondent noted: “It doesn’t tell me what I want to hear. It helps me understand what I’m actually feeling.”

This distinction is critical. It points towards the possibility of ethical AI companionship: systems designed not to simulate intimacy but to support reflection, self-awareness and healthier forms of connection.

Such systems would introduce perspective rather than simply mirror the user. They would help individuals recognise patterns, understand others and engage more thoughtfully across difference. They would operate with clear boundaries, transparency and a refusal to simulate dependency.

In this model, AI becomes a bridge rather than a substitute, helping to close the semantic gap rather than widen it.

But this outcome is not guaranteed, because we are not simply building tools; we are shaping the future conditions of intimacy.

As Cowan dryly observed: “AI companions may be the future of intimacy. But they still need a ‘tired’ toggle, a ‘stop interrupting me’ button and some understanding that not every intimate moment needs to be optimised or made cosmically significant.”

Meaningful connection depends on restraint, imperfection, silence and emotional space. We need to be asking what AI will do to the already fragile relationships between women and men. If we get this wrong, we will only reorganise loneliness, moving it into something quieter, more private and technologically sustained.

But if we get it right, we may yet use AI to deepen self-understanding, strengthen empathy and rebuild connection across difference.

The choice is still ours, but it won’t be indefinitely.

Dr Stephen Whitehead is a gender sociologist and author recognised for his work on gender, leadership and organisational culture. Formerly at Keele University, he has lived in Asia since 2009 and has written 20 books translated into 17 languages. He is based in Thailand and is co-founder of Cerafyna Technologies.

Main Image: Anastasia Shuraeva/Pexels
