Why every system fails without a moral baseline

Vendan Ananda Kumararajah

- Opinion & Analysis

A generation of leaders has built systems that work smoothly but often lose their moral direction. They focus on goals and processes, while the values that should guide them fade into the background. Vendan Ananda Kumararajah calls for ethics to sit at the very start of any design process so that institutions, technologies and intelligent systems grow on solid ground and keep their integrity as they become more independent.

For decades, technology experts have focused on how systems work and what they aim to achieve. They have mapped processes, links and feedback loops with great detail. But one element still receives far less attention than it should: the moral direction that guides any system. As technology grows more complex, ethics needs a place at the core of our thinking so that purpose and action remain grounded in responsibility.

While function tells us what a system does, purpose explains why. Yet neither answers what ought to be done or by what moral logic. Too often, ethics is patched on late, after technical solutions are set. As a result, organisations appear to be functioning well on the surface yet lose the trust and integrity that give them legitimacy, and intelligent systems begin to produce outcomes that no longer reflect their designers’ intentions.

Many people still assume that systems sit above moral debate – that they can be ‘ethically neutral’ in some way – but in practice, no system is built without values. Every model reflects the priorities of its designers, and every function favours certain results. The language of optimisation often masks those choices, which makes the underlying values harder to see and even harder to question.

When ethics becomes an afterthought, as it so frequently is, it changes from conscience to mere compliance and regulation. Systems then drift, like powerful engines with broken compasses.

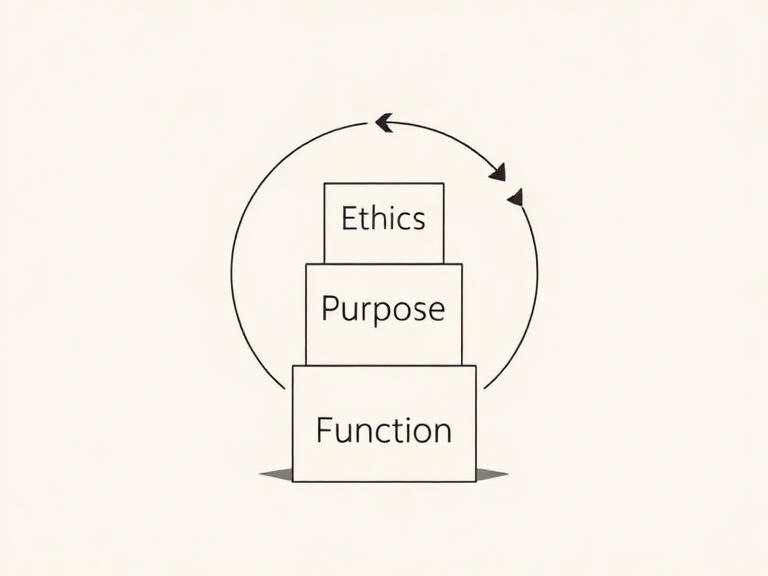

Ethics works as the starting point for any system. It gives purpose a clear foundation and it gives function a responsible direction. When ethics sits at the beginning, the design process becomes a cycle of learning:

Ethics → Purpose → Function → Reflection → Renewed Ethics.

This cycle allows a system to understand what it is doing and why, and to keep its actions aligned with its core values.

This principle is formalised in my A3 Model, a synthesis of Tamil philosophy and systems science in which ethical coherence (Aram) generates awareness of distortion (Aanavam) and sustains legitimate agency (Adhikaram), forming a living loop of moral intelligence. Beyond any single framework, the insight is universal: ethics is the grammar of coherence, keeping purpose pure and function humane.

Every system faces distortion – entropy, bias, drift. Resilience lies not in removing distortion but in detecting and correcting it. Distortion (Aanavam) must be balanced by legitimate agency (Adhikaram): the capacity and duty to act responsibly.

Agency without vigilance becomes arrogance; vigilance without agency becomes paralysis. Balance is achieved only when both travel together under ethical coherence.

Whether in governments, companies, or algorithms, failure is not merely functional but foundational – the loss of moral direction.

In an era of artificial intelligence and autonomous action, ethics must become structural, not a postscript. Machines and institutions now react faster than policy. Correction can’t wait until after harm is done. Moral architecture must be built into the operation itself.

This is not about moralising machines – rather, it’s about embedding awareness of consequence, so systems learn to self-examine and remain aligned.

The shift is from external morality to internal coherence. Biological systems maintain balance through feedback, not external punishment. So too must our institutions and technologies, by design and not decree.

The systems age began with promises of control; now it faces the complexity of recursion. As our tools gain autonomy, governance must grow more reflexive. But reflexivity without ethics simply multiplies mirrors without ever asking why.

The ethical turn must precede the systemic turn. When ethics leads, purpose follows, and function aligns with life. Ancient Tamil philosophy calls this Aram: righteousness as the condition for flourishing.

If our intelligence is to become conscience, the sequence must be restored: Ethics first, Purpose second, Function third.

Vendan Ananda Kumararajah is an internationally recognised transformation architect and systems thinker. The originator of the A3 Model—a new-order cybernetic framework uniting ethics, distortion awareness, and agency in AI and governance—he bridges ancient Tamil philosophy with contemporary systems science. A Member of the Chartered Management Institute and author of Navigating Complexity and System Challenges: Foundations for the A3 Model (2025), Vendan is redefining how intelligence, governance, and ethics interconnect in an age of autonomous technologies.

Do you have news to share or expertise to contribute? The European welcomes insights from business leaders and sector specialists. Get in touch with our editorial team to find out more.
