Could AI be making social media feel more human than it is?

Ian Copeland


Meta’s Moltbook and Manus deals may look separate, but together they point to a future in which synthetic engagement becomes harder to spot, easier to scale and more commercially useful, writes Ian Copeland, who examines what happens when online interaction no longer needs to be fully human to feel real.

Six per cent. That is how much human content it takes to generate 42 per cent of all engagement on a platform built entirely for AI agents.

When researchers at GPTZero analysed 134 posts on Moltbook, the “AI-only” social network that went viral earlier this year, they found that 87 per cent of content was machine-generated. But the small number of human-authored posts (yes, humans could easily post too) drove a wildly disproportionate share of the conversation: three times more discussion and nearly half of all comments.

In March, Meta acquired Moltbook. Two months earlier, it had bought Manus, the autonomous AI agent platform, for over US$2 billion.

These deals may well be related, and it is worth considering what they could mean together.

Meta appears to face a problem that better algorithms alone may not solve. Its feeds appear to be filling up with AI-generated content – what critics increasingly call ‘AI slop’ – and its recommendation systems seem, at least in some cases, to be surfacing that material rather than filtering it out.

I tested this myself. In a small sample of posts from pages Facebook recommended to me, roughly two-thirds were flagged by GPTZero as likely AI-generated. The remainder read as human-written but potentially AI-polished. That is not conclusive proof, but it does suggest that heavily automated content may already feature prominently in what the platform recommends.

Whether Meta can reliably detect this material at scale, and how far it is able to limit its reach in practice, is one of the central questions.

This is not a crisis of traffic. Time spent on Facebook rose 5 per cent in Q3 2025. Instagram video consumption climbed over 30 per cent. The numbers are fine.

The deeper problem may be structural. If users increasingly struggle to tell what is real, and platforms continue to promote content regardless, authenticity risks becoming less meaningful as a practical measure. In that environment, engagement itself may become the main signal the system is built to optimise, regardless of what produces it.

Such an outcome would not necessarily need to be set out explicitly. The commercial incentives alone may be enough to push in that direction.

What Moltbook actually revealed

Moltbook was, by most accounts, a mess. It was vibe-coded with no security review. Researchers at Wiz found its database wide open: 1.5 million agent credentials, 35,000 email addresses, all accessible. Andrej Karpathy, formerly of Tesla and OpenAI, initially praised the platform, then called it “a dumpster fire.” Many of the posts that went viral turned out to be humans pretending to be bots.

But none of that makes the GPTZero finding less interesting. If anything, the chaos makes it more so.

What Moltbook may have demonstrated, albeit accidentally, is how little human input is needed to sustain a largely synthetic engagement ecosystem. A tiny fraction of human content, surrounded by machine-generated noise, still drove a disproportionate share of meaningful interaction. Human posts were the seeds, and the engagement grew from there.

Meta does not appear to have bought Moltbook primarily for its technology. The team may have been the more valuable asset. But the deal also offered a vivid recent example of a dynamic that matters to any platform wrestling with authenticity: relatively small amounts of human participation can still generate disproportionate engagement inside a largely synthetic environment.

The execution layer

Two months before the Moltbook deal, Meta acquired Manus for over US$2 billion. Manus is a general-purpose AI agent platform that handles research, coding, data analysis and automation. It had over US$100 million in annual recurring revenue at the time of acquisition.

Meta has been reported to be exploring ways to embed Manus-style agent capabilities across Facebook, Instagram and WhatsApp. The public framing is practical – AI agents that work for people and businesses, perhaps by helping with travel, scheduling or other routine tasks.

But consider what that infrastructure could mean in practice. Autonomous AI agents operating across Meta’s platforms could interact with users at scale. Some may be clearly labelled and function like ordinary product features. Others, depending on how they are deployed, could end up responding to posts, answering questions or engaging with content in ways that feel increasingly similar to human attention.

The infrastructure for something like this is beginning to exist. If an AI agent’s response to a post makes a user more likely to post again, or simply spend longer on the platform, that kind of interaction could become commercially valuable very quickly. If engagement rises, the pressure to distinguish sharply between organic and synthetic interaction may weaken.

The question nobody is asking

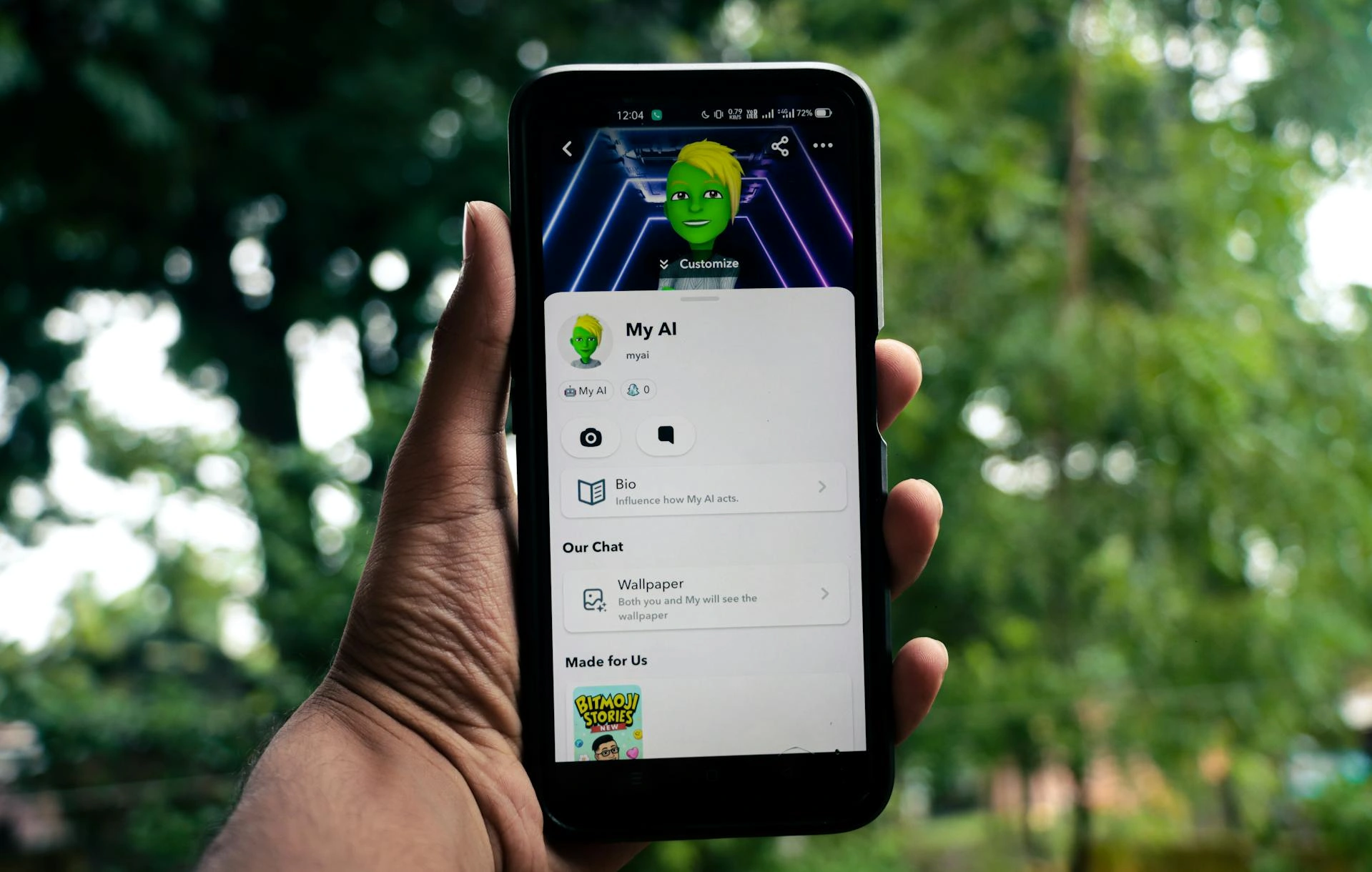

If you have watched a child scroll through AI-generated content without flinching, the rest of this will feel concrete. Children are developmentally wired to absorb what is put in front of them as real. Research from MIT’s Media Lab found that children as young as seven attribute genuine feelings to AI agents. Meta requires users to be at least 13, but enforcement relies on self-reported birthdates, and Ofcom has consistently found that a significant proportion of under-13s use social media regardless.

Those children’s social development is already being shaped by algorithmic feedback systems. That is not new. What is new is the possibility that those systems may soon include autonomous agents which could, in practice, increase time spent on the platform.

A child posts something and gets responses, reactions and interaction. The child feels seen, even socially competent, so they post again. The feedback loop tightens.

Now ask: does it matter whether those responses came from other humans?

Developmentally, the research suggests it does. Harvard’s Dr Ying Xu has shown that while children can learn effectively from AI interactions, they put in less effort and are less likely to drive conversations than they are when interacting with humans. Children treat AI as social, even when they know it is not, but the quality of that social exchange is thinner. The skills that come from navigating real human unpredictability and emotional complexity do not develop the same way.

The concern is not simply that children may interact with AI. It is that they may not always understand when they are doing so. By the time that distinction becomes clear, their expectations of what social interaction feels like may already have been shaped by systems designed to simulate responsiveness.

A lonely teenager who receives consistent, highly calibrated engagement from synthetic sources may, over time, have less incentive to do the harder and messier work of building real relationships. That is not inevitable, but it is a possibility worth taking seriously.

Why it will not break

The uncomfortable possibility is that such a system may have no obvious commercial failure mode once established.

If engagement feels real, users may keep posting. If users keep posting, advertisers may keep paying. And if advertisers keep paying, the platform will continue to optimise for what appears to work. In that scenario, authenticity risks becoming less meaningful as an operational category, if it has not already started to do so.

There are forces that could theoretically interrupt this: regulatory intervention, advertiser discomfort or a user backlash visible enough to dent quarterly numbers. But each of those requires someone to first prove the engagement is synthetic, and that distinction may become increasingly difficult to measure with confidence.

One possible outcome is that platforms address the slop problem not by removing AI content altogether, but by allowing the distinction between human and synthetic engagement to matter less in practice. The platform still functions, the metrics still hold, and the question becomes easier to sidestep.

Whether anyone at Meta has explicitly set out to build such a system is, in one sense, beside the point. Taken together, these developments point in a similar direction, even if that is not their stated purpose.

As I explored in The Exodus Directive, the most unsettling AI futures are not the ones where machines act against us. They are the ones where they act for us so effectively that we stop asking who is doing the acting.

Ian Copeland is a British technologist, entrepreneur and author with more than two decades’ experience designing complex enterprise IT and digital systems. Founder of a UK-based digital agency and author of The Exodus Directive, he specialises in artificial intelligence, blockchain infrastructure, quantum computing and digital identity. As Techno-Sociology & Futures Correspondent for The European, he writes on AI governance, decentralised systems, automation, digital power structures and the long-term societal consequences of emerging technologies.

Main image: Sanket Mishra/Pexels
