The legal case against Britain’s new data regime

Dr Raj Joshi

The Data (Use and Access) Act 2025 marks the most significant change to the UK’s data protection framework since the adoption of GDPR, raising profound questions about ministerial power, automated decision-making, financial privacy and the future limits of state surveillance. Supporters present the legislation as a modernising measure designed to reduce bureaucracy and support innovation, but critics warn that it weakens established rights, expands the state’s practical access to personal data and risks eroding public trust. Here, barrister Dr Raj Joshi argues that one of the defining questions for Britain’s digital future is whether this new regime can be squared with the privacy principles already laid down by domestic courts and the European Court of Human Rights.

Surveillance has developed alongside state power for centuries, from spies and intercepted letters to wiretapping, CCTV and the bulk collection made possible by the digital age. Each technological advance has extended the state’s capacity to gather, store and analyse personal information, and the Data (Use and Access) Act 2025 forms part of that wider history.

The Act, which came into force last June, was trumpeted as a measure to cut red tape and support economic growth, reforming the rules on how data can be accessed, shared and used across the economy and public services.

But unlike most new laws, which take effect in full on a clearly defined date, this Act has been rolled out in stages through regulations made by ministers; some parts came into force last year, major changes to data protection and privacy followed in February this year, and further provisions are still due to take effect.

The result is that the state’s powers under the Act have expanded incrementally over time through ministerial action, widening its practical ability to access, analyse and use personal data, including the financial information of millions of welfare claimants.

Civil society groups, legal experts and privacy advocates warn that this process is systematically weakening long-standing protections. From a legal perspective, at least, I must agree. Domestic courts and the European Court of Human Rights have repeatedly drawn clear boundaries around state intrusion into private life, particularly where personal data is concerned.

The position is especially clear in relation to financial information. The European Court of Human Rights has repeatedly held that bank data falls within the core of private life. In M.N. and Others v San Marino, the Court stated that “information relating to a person’s bank account… is to be regarded as particularly sensitive”. In G.S.B. v Switzerland, it reaffirmed that “the transmission of the applicant’s bank data constituted an interference with his private life”. The Act nevertheless widens the circumstances in which public bodies, and potentially private contractors, can access and share financial data. Critics argue that this creates the conditions for routine, suspicionless monitoring of benefit claimants’ accounts.

One of the most controversial features of the Act is the broad power it gives the Secretary of State to amend core data protection rules through secondary legislation. These are commonly described as Henry VIII powers, a reference to the 1539 Statute of Proclamations, which allowed the Crown to legislate by proclamation. That approach sits uneasily with the requirement for legal clarity in any regime that interferes with privacy rights. In Liberty v United Kingdom, the European Court of Human Rights held that the UK’s interception regime “did not indicate with sufficient clarity the scope or manner of exercise of the discretion conferred on the authorities”. The Public Law Project therefore argues, with force, that powers of this breadth allow ministers to reshape privacy rights without meaningful parliamentary scrutiny.

The Act also changes the rules for decisions made entirely by computers in cases where the outcome can seriously affect someone’s life. The previous position placed tighter limits on solely automated decisions with legal or similarly significant effects. The new framework gives wider room for their use, with the emphasis shifting to safeguards after the event. The Open Rights Group argues that this flips the previous presumption against automated decision-making and makes it easier for AI and other automated systems to make important decisions in welfare, policing and immigration, even where the consequences for the individual may be severe and meaningful human scrutiny is limited. The courts have already identified the danger of weak safeguards in this area. In R (Bridges) v Chief Constable of South Wales Police, the Court of Appeal held that “the current legal framework is not sufficient to ensure appropriate safeguards”. The need for care is even greater in the welfare system, where rigid decision-making has already caused serious injustice. In R (Johnson & Others) v Secretary of State for Work and Pensions, the Court of Appeal condemned aspects of Universal Credit as producing “perverse” and “irrational” outcomes.

Elsewhere, the Act weakens the practical force of a person’s right to access their own data. The significance of that change is clear from R (Catt) v ACPO, in which the Supreme Court held that “the mere storing of information about an individual is an interference with private life”. Access to that information forms part of the means by which an individual can understand, test and challenge the state’s use of it. By requiring only “reasonable and proportionate” searches, the Act gives public bodies more room to limit what they look for and what they disclose. Civil society groups fear that this will make it easier for authorities to narrow legitimate requests, leaving individuals with less information about how they have been assessed or treated, making mistakes harder to detect and decisions harder to contest. In a system such as welfare, where errors can already produce irrational and damaging outcomes, that loss of visibility carries obvious and serious risks.

The same pattern appears elsewhere in the Act. It broadens the definition of “scientific research” and expands data-sharing powers for public service delivery, prompting warnings from MPs and patient advocacy groups that private firms could gain wider access to sensitive NHS data despite government assurances that patient information is not for sale. It also extends biometric retention and cross-government data sharing, adding to fears of a more permanent surveillance infrastructure. In Big Brother Watch v United Kingdom, the Grand Chamber stressed that “end to end safeguards are essential to ensure the integrity of a bulk interception regime”. Critics argue that the direction of travel under this Act sits uneasily with that principle.

The consequences are not confined to privacy claims in the domestic courts, either. They also affect the rules governing the transfer of personal data from the EU to the UK. At present, the UK benefits from what is known as an “adequacy decision”. In simple terms, that is the EU’s formal recognition that Britain’s data protection standards remain high enough for personal data to be sent here without extra legal mechanisms being required for each transfer. It keeps everyday cross-border data flows relatively straightforward, whether the information concerns customers, employees, patients, suppliers or users of online services.

The UK first received adequacy in 2021, but only for a limited period ending in December 2025. When the government began reshaping domestic data law, including through the Data (Use and Access) Act 2025, the EU had to decide whether those changes still left the UK close enough to European standards to justify that status. The answer, for now, was yes; adequacy was renewed until 2031. But the renewal came with clear warnings. European regulators pointed to areas in which UK law could move further away from EU norms, including the breadth of ministerial powers to amend data rules and the more permissive approach to automated decision-making.

That conclusion carries practical consequences. So long as adequacy remains in place, businesses and public bodies can continue moving personal data from the EU to the UK in the ordinary course of trade and administration, without having to build a more complex legal structure around every transfer. If adequacy were revisited or withdrawn, that position would become far more difficult. Organisations would have to rely on alternative transfer tools, face greater compliance costs, and operate under much greater legal uncertainty. The argument about weakened safeguards is therefore not only a civil-liberties concern. It also carries commercial consequences for any organisation that depends on the routine movement of data between Britain and Europe.

Dr Raj Joshi is a senior barrister and prominent civil rights advocate whose career spans frontline legal practice, regulatory reform, and international justice. Twice named among the ‘Top 10 Asian Lawyers in the UK’ and listed in the ‘100 Most Influential Asians in the UK’, he has appeared before major inquiries, including giving evidence in the Stephen Lawrence case, and served as Chair of the Society of Black Lawyers. A former Adjudicator to the Solicitors Regulation Authority, Dr Joshi has advised ministers, helped shape legal protocols, and represented the UK in international legal forums.

Main Image: Alex Knight/Pexels

RECENT ARTICLES

-

Equality has a cost — and men will have to pay it

Equality has a cost — and men will have to pay it -

The hidden workplace inertia trap – and how leaders can overcome it

The hidden workplace inertia trap – and how leaders can overcome it -

To fix a broken America, it must turn away from empire

To fix a broken America, it must turn away from empire -

What Orbán’s fall means for Europe, the US and Russia

What Orbán’s fall means for Europe, the US and Russia -

Visibility is not power: What the film industry still withholds from women

Visibility is not power: What the film industry still withholds from women -

The dollar isn’t collapsing — but it is starting to matter less

The dollar isn’t collapsing — but it is starting to matter less -

When “We will raise it” becomes the problem

When “We will raise it” becomes the problem -

Solving Britain’s male misogyny crisis starts at home

Solving Britain’s male misogyny crisis starts at home -

Will it make the boat go faster?” How hotelier Kostas Sfaltos built a leadership philosophy around a single question

Will it make the boat go faster?” How hotelier Kostas Sfaltos built a leadership philosophy around a single question -

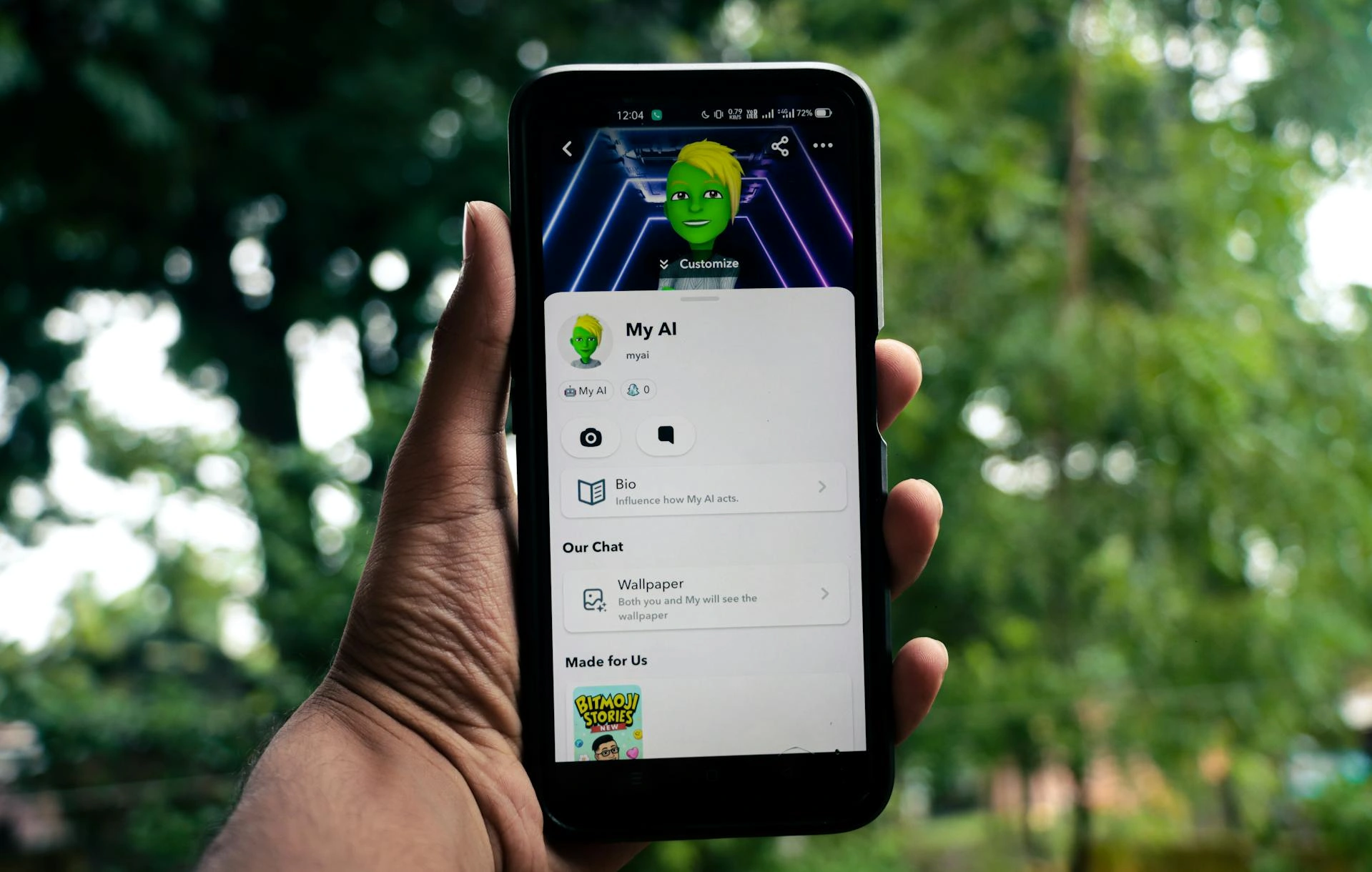

Starmer’s tough line on teen social media risks making a bad problem worse

Starmer’s tough line on teen social media risks making a bad problem worse -

Why these bleak, rain-lashed islands may matter more than we think to Arctic security

Why these bleak, rain-lashed islands may matter more than we think to Arctic security -

Why disabled people need peer support more than ever

Why disabled people need peer support more than ever -

The myth of gender-neutral tech

The myth of gender-neutral tech -

Can Trump drag Britain deeper into Iran’s war? International law says no

Can Trump drag Britain deeper into Iran’s war? International law says no -

Could AI be making social media feel more human than it is?

Could AI be making social media feel more human than it is? -

Your staff are using AI in secret – here’s how smart leaders should respond

Your staff are using AI in secret – here’s how smart leaders should respond -

Has Big Tech hijacked the AI summits?

Has Big Tech hijacked the AI summits? -

What Mexico’s giant data breach tells us about the new hacking age

What Mexico’s giant data breach tells us about the new hacking age -

France’s quest to secure UNESCO recognition for sea rescue

France’s quest to secure UNESCO recognition for sea rescue -

How the EU abandoned its cage ban promise

How the EU abandoned its cage ban promise -

What kind of masochist would want to run the BBC?

What kind of masochist would want to run the BBC? -

Workplace inclusivity must be all or nothing — otherwise it fails

Workplace inclusivity must be all or nothing — otherwise it fails -

Britannia no longer rules the waves

Britannia no longer rules the waves -

Britain must defend its streets as well as its borders

Britain must defend its streets as well as its borders -

Silicon Valley is finally being forced to answer for what it built

Silicon Valley is finally being forced to answer for what it built