People act more rationally when they think they are dealing with AI, study finds

Dr Stephen Whitehead

Researchers found participants were more willing to accept an unfair cash split from an AI partner than from a human, suggesting people see artificial intelligence as more logical and less driven by emotion

People make more economically rational decisions when they believe they are interacting with artificial intelligence rather than another person, according to research.

In a new study, participants took part in an economic game involving real money and were asked whether to accept or reject an unfair offer from either an AI or a human partner.

The proposed split was heavily weighted against them: 90 cents for the partner and 10 cents for the participant from a total of one dollar. If the participant rejected the offer, both sides received nothing.

From a purely economic point of view, accepting the 10-cent offer is the rational choice because it leaves the participant better off than rejecting it and getting nothing.
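The payoff logic described above can be written out as a short sketch. The names `Offer`, `payoffs` and `payoff_maximising_choice` are illustrative assumptions, not part of the study, and the code models only the narrow economic definition of rationality used here, not the researchers' method or analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """A proposed split of a fixed pot, in cents (hypothetical helper type)."""
    total_cents: int
    responder_cents: int

    @property
    def proposer_cents(self) -> int:
        # Whatever the responder is not offered stays with the proposer.
        return self.total_cents - self.responder_cents


def payoffs(offer: Offer, accept: bool) -> tuple[int, int]:
    """Return (proposer, responder) payoffs in cents for a given decision."""
    if accept:
        return offer.proposer_cents, offer.responder_cents
    # A rejection leaves both sides with nothing.
    return 0, 0


def payoff_maximising_choice(offer: Offer) -> bool:
    """True if accepting leaves the responder strictly better off than rejecting."""
    accept_payoff = payoffs(offer, accept=True)[1]
    reject_payoff = payoffs(offer, accept=False)[1]
    return accept_payoff > reject_payoff


# The split described in the article: 90 cents to the partner, 10 to the participant.
offer = Offer(total_cents=100, responder_cents=10)
print(payoffs(offer, accept=True))      # (90, 10)
print(payoffs(offer, accept=False))     # (0, 0)
print(payoff_maximising_choice(offer))  # True
```

Under this narrow definition any offer above zero is worth accepting, which is why a rejection is usually read as a response to unfairness and not as a way to maximise payoff.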

The researchers found that participants who believed they were dealing with AI were significantly more likely to accept the unfair split than those who thought the offer had come from a human.

The study by UCD Michael Smurfit Graduate Business School suggests that people tend to see AI as more reason-driven and human decision-making as more influenced by emotion.

The researchers said this may mean people adjust their own behaviour to match a counterpart they believe is more logical, and that the effect may not reveal anything unique about AI itself.

The findings could matter well beyond the lab as AI becomes more common in business, public policy and negotiations, where perceptions of how machines “think” may shape the choices people make in response.

The research was carried out by behavioural scientists Dr Suhas Vijayakumar, Dr Yuna Yang and Dr David DeFranza, who examined how “lay beliefs” – everyday assumptions about how something works – can influence behaviour directly.

Decision-makers should be aware that people may bring pre-existing assumptions about AI’s decision-making style into interactions, and that those beliefs may affect how readily they accept AI recommendations, they said.

Dr Vijayakumar added: “We speculate perhaps a reason why people are less likely to accept a similar unfair offer from a person (human), could also be because of expectations of reciprocity and emotional fairness that we share with other human beings. Future research needs to look at further expectations and beliefs about AI.”


TOP STORIES

-

Claude maker Anthropic valued at nearly $1tn after record AI funding round

Claude maker Anthropic valued at nearly $1tn after record AI funding round -

Felled Sycamore Gap tree ‘to speak again’ in UK national memorial

Felled Sycamore Gap tree ‘to speak again’ in UK national memorial -

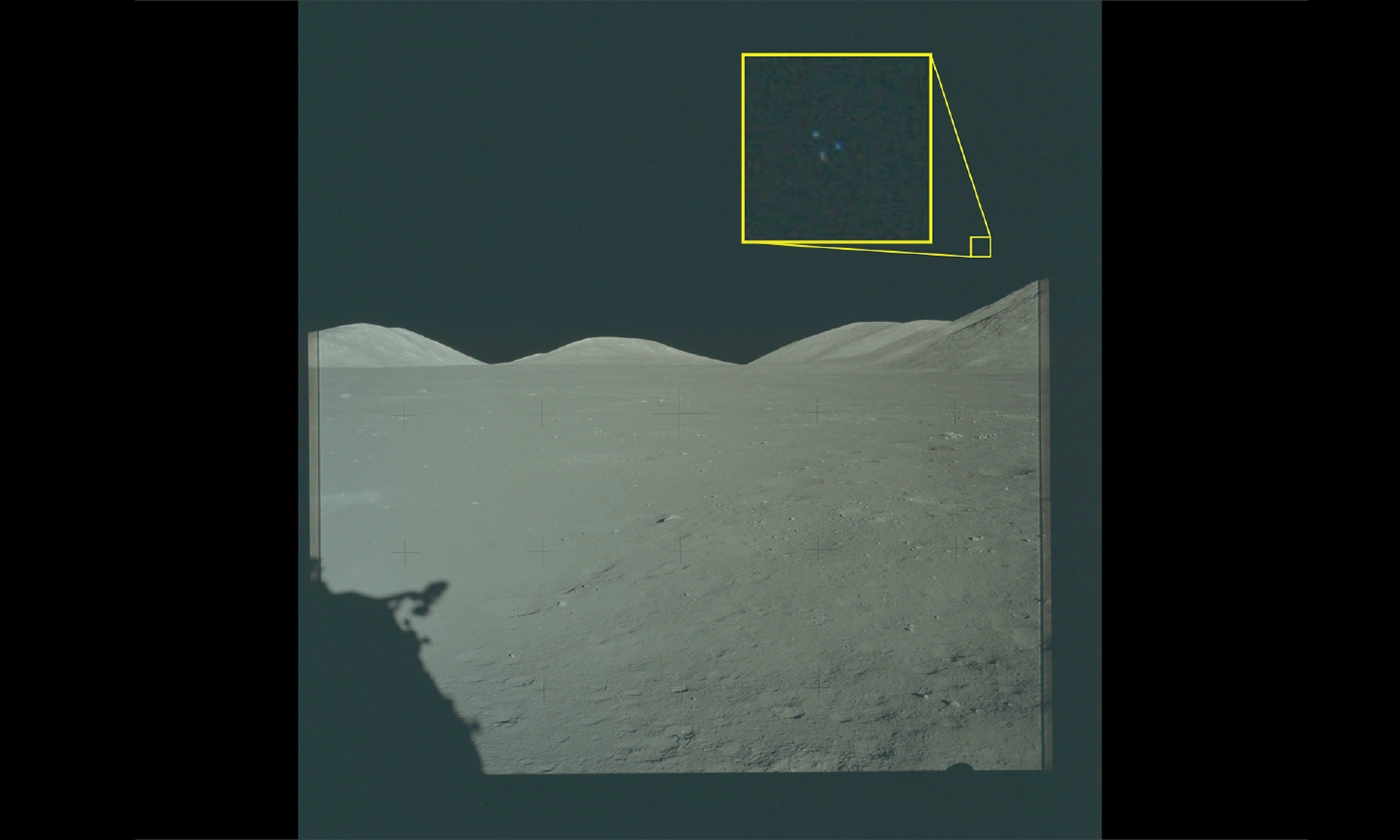

NASA to send rabbit-like drones to scout site for first Moon base

NASA to send rabbit-like drones to scout site for first Moon base -

Apollo, Artemis, Ali and Live Aid satellite station set for new Moon role in £37m deal

Apollo, Artemis, Ali and Live Aid satellite station set for new Moon role in £37m deal -

BrewDog founder pours free shares into new beer firm

BrewDog founder pours free shares into new beer firm -

Inside gaming billionaire Gabe Newell’s next-level gigayacht

Inside gaming billionaire Gabe Newell’s next-level gigayacht -

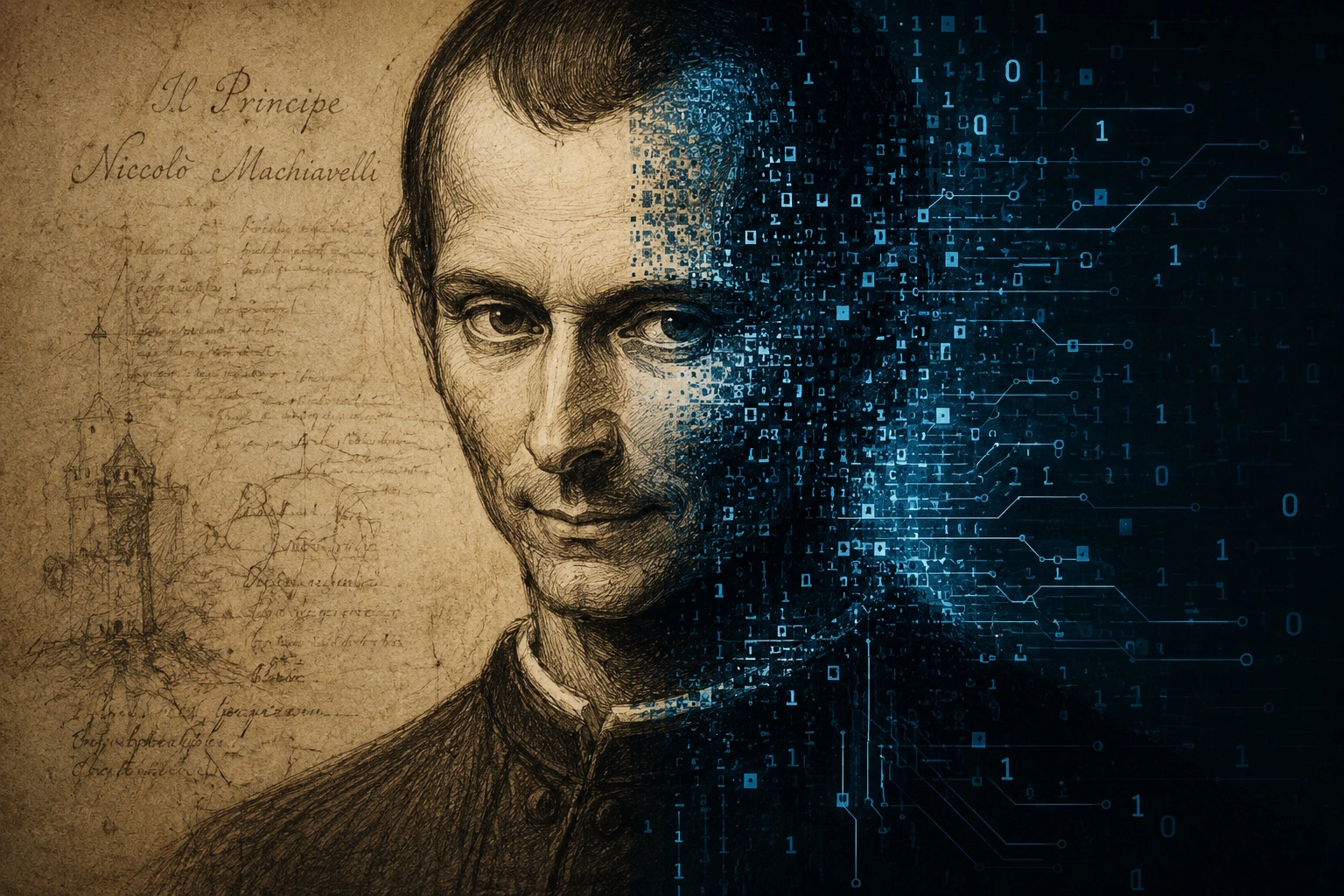

Machiavell-AI? Autonomous artificial intelligence systems ‘could become dangerously manipulative’, experts warn

Machiavell-AI? Autonomous artificial intelligence systems ‘could become dangerously manipulative’, experts warn -

Prague targets high-value business travellers after global congress ranking boost

Prague targets high-value business travellers after global congress ranking boost -

eBay rejects GameStop bid

eBay rejects GameStop bid -

AI EVERYTHING KENYA X GITEX KENYA summit launches in Nairobi as East Africa accelerates AI ambitions

AI EVERYTHING KENYA X GITEX KENYA summit launches in Nairobi as East Africa accelerates AI ambitions -

Xpeng eyes European factory as VW seeks to offload spare capacity

Xpeng eyes European factory as VW seeks to offload spare capacity -

This hidden Greek beach has just been named the best in Europe

This hidden Greek beach has just been named the best in Europe -

Siemens expands rail technology arm with Italian deal

Siemens expands rail technology arm with Italian deal -

New routes put Europe’s rail revival back on track

New routes put Europe’s rail revival back on track -

Parked electric cars could help power island ferries in German trial

Parked electric cars could help power island ferries in German trial -

UK billionaire count falls as wealthy quit Britain, Sunday Times Rich List shows

UK billionaire count falls as wealthy quit Britain, Sunday Times Rich List shows -

Macron unveils £20bn Africa push as France strikes new Kenya deals

Macron unveils £20bn Africa push as France strikes new Kenya deals -

Italy draws global tech investors as Europe races to build its own champions

Italy draws global tech investors as Europe races to build its own champions -

Opel turns to Chinese EV technology for new European-built SUV

Opel turns to Chinese EV technology for new European-built SUV -

Japan and Luxembourg deepen space ties as lunar race gathers pace

Japan and Luxembourg deepen space ties as lunar race gathers pace -

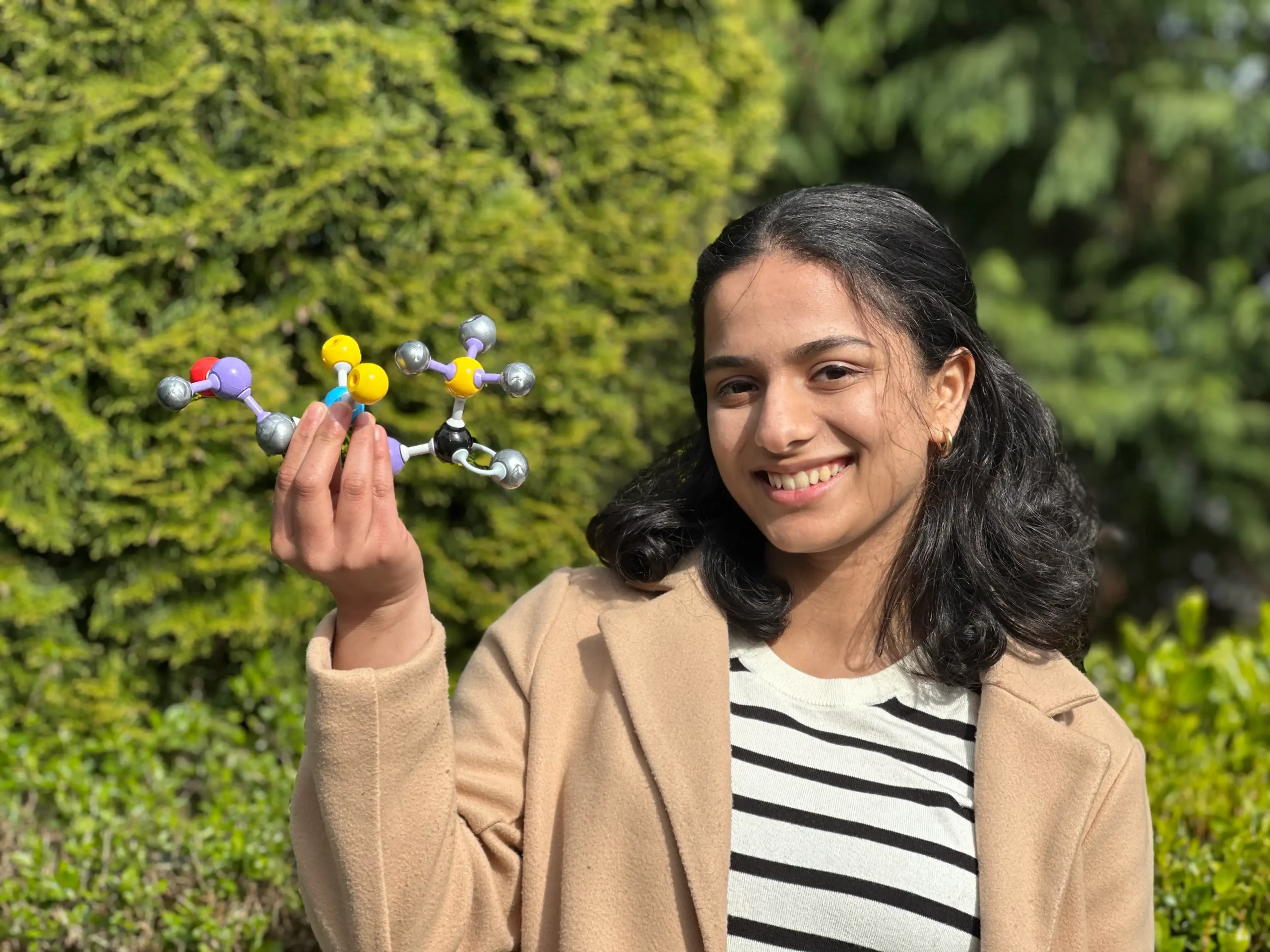

Meet the Earth Prize-winning teenager tackling the world’s microplastic crisis

Meet the Earth Prize-winning teenager tackling the world’s microplastic crisis -

Starmer fights for future as he moves to nationalise British Steel

Starmer fights for future as he moves to nationalise British Steel -

Bluebird returns to Coniston 59 years after Campbell’s fatal crash

Bluebird returns to Coniston 59 years after Campbell’s fatal crash -

Pentagon reopens Moon mystery in huge UFO files release

Pentagon reopens Moon mystery in huge UFO files release -

De Niro's Nobu heads to the country with first rural hotel in Rutland

De Niro's Nobu heads to the country with first rural hotel in Rutland