Transparency is critical to an AI-led future

John E. Kaye

For too long, and in too many spheres of public life, labyrinthine AI systems that apply algorithms to huge datasets have been the norm in automation, and few people other than data scientists can understand how they work. The consensus building across Europe is that this mode of AI needs to be made more accessible and accountable – and rightly so. Promoting transparent, human-down AI is the way to make it happen.

Building a harmonised ethical approach to AI

As a member of the UK’s All Party Parliamentary Group on AI, I’ve taken part in numerous discussions on AI ethics, many of them stimulated by new attempts at government regulation.

Many believe that Europe is leading the way in its approach to AI ethics and that, while China and the US may be racing ahead in terms of AI growth, we can collectively lay the foundation for a future led by trustworthy, accountable AI. This is important not only to our principles, but also to our industry: as seen in the “Charter on AI Ethics”, the EU Commission’s High Level Group on AI believes that the creation of consumer trust is the secret to closing the AI industrial gap with the US and China.

GDPR: A step in the right direction

The results of attempts at wide-ranging AI governance so far are a mixed bag, and understandably so: AI as a whole is difficult to define, let alone regulate.

But the General Data Protection Regulation (GDPR), while not yet the comprehensive legislation to put the explainability issue to rest (it guarantees the “right to be informed” about the existence of automation rather than the right to a rationale), is a sign of things to come.

With the EU seeking to improve accountability and data privacy, and with separate reports from the French, UK and German governments advocating similar values, businesses should prepare for regulation to soon catch up to the lightning pace of innovation. The best way to prepare, of course, is to be fitted out with auditable technology that will be suited to new transparency-based regulation.

Human-down technology delivers explainability

Regardless of whether legislation can currently enforce it, what the market needs is automated decision-making modelled on human expertise – rather than elusive data-driven matrixes. Keeping people at the centre of automated decision-making is the best way to match the productivity of AI-powered automation with the trust and understanding that both users and consumers deserve.

In fraud detection, it’s not news that automation has improved the efficiency and volume of fraud processing. But if a live agent can also explain why they think a case might be fraudulent, they have a much better chance of keeping the customer engaged and using the service.

When Rainbird worked with the fraud department of a major international bank, for example, we took the client’s best fraud expertise and built a model around it, able to replicate and scale a human understanding of nuanced cases. With the solution based on logic and data set by business experts, rather than on huge, hard-to-fathom algorithms and datasets, each decision made by the fraud engine is transparent, with rationale accessible via an in-built audit trail. No one is left in the dark about why a transaction has been flagged.
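
To make the idea of an expert-defined rule set with a built-in audit trail concrete, here is a minimal sketch in Python. It is not Rainbird’s implementation: the rule names, thresholds, transaction fields and the `assess` function are all hypothetical, chosen only to illustrate how each decision can carry its own plain-language rationale.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Rule:
    """One piece of expert knowledge: a named condition plus a plain-language reason."""
    name: str
    applies: Callable[[dict], bool]
    reason: str


@dataclass
class Decision:
    """The outcome for one transaction, with a record of every rule evaluated."""
    flagged: bool
    audit_trail: list[str] = field(default_factory=list)


# Hypothetical rules: thresholds and field names are invented for illustration.
RULES = [
    Rule("large_amount", lambda t: t["amount"] > 5000,
         "Amount exceeds the 5,000 review threshold"),
    Rule("new_payee", lambda t: t["payee_age_days"] < 1,
         "Payee was added less than a day ago"),
    Rule("foreign_country", lambda t: t["country"] != t["home_country"],
         "Transaction originates outside the customer's home country"),
]


def assess(transaction: dict, rules: list[Rule] = RULES, min_hits: int = 2) -> Decision:
    """Flag a transaction when at least `min_hits` rules fire, recording why."""
    trail = []
    hits = 0
    for rule in rules:
        if rule.applies(transaction):
            hits += 1
            trail.append(f"{rule.name}: {rule.reason}")
        else:
            trail.append(f"{rule.name}: not triggered")
    return Decision(flagged=hits >= min_hits, audit_trail=trail)


if __name__ == "__main__":
    decision = assess({"amount": 9000, "payee_age_days": 0,
                       "country": "GB", "home_country": "GB"})
    print("Flagged:", decision.flagged)
    for entry in decision.audit_trail:
        print(" -", entry)
```

Because every rule is written and named by a subject matter expert, the trail a fraud agent reads back to a customer uses the same language the business already uses, which is the point of the approach described above.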

Business-friendly implementation

To build consumer understanding and trust in AI as the EU Commission intends, the businesses leveraging AI need to have a sure grasp of how their tools work. The nature of the implementation process here is essential: it needs to involve technical AI specialists collaborating with an organisation’s subject matter experts to create a solution that speaks to them in their own language. At Rainbird we have an implementation team that workshops with clients not only to devise a tailored solution, but also to teach them how to use Rainbird. Our technology is built for this purpose: it’s modelled with simple code and in an intuitive visual interface, so that it doesn’t take a data scientist to run – it can be understood and controlled by business people, who can in turn offer better explanations to their customers.

The correlation between the rise in human-governed AI and the increasing push towards transparency is no coincidence; the two are dependent on one another. This is because the biggest benefit of having an AI platform governed by human rules is that there are subject matter experts who can always provide that much-needed clarity about why and how decisions are being automated.

Bringing about explainable AI

Based on our own experience in the industry in recent years, the demand for explainable AI is increasing all the time. The auditable nature of our technology has proved integral to our recent projects, from improving transparency in trading with our automated inter-dealer broker, to shoring up compliance for accounting firms.

2018 was our biggest year yet: as well as adding to our client roster, we were selected to join the London Stock Exchange Group (LSEG) business support ecosystem ELITE, and now we’re in the middle of a US push to expand our net of global partners and clients and continue our growth.

Looking back to the business environment we were born into in 2013, it’s clear the industry has grown alongside us. Explainability is no longer the fringe issue it was then. It’s now of central importance to any company using or considering using AI. Forrester found that 45% of AI decision makers say trusting the AI system is either challenging or very challenging, while 60% of 5,000 executives surveyed by the IBM Institute for Business Value expressed concern about being able to explain how AI is using data and making decisions.

I am further encouraged by the forecasts for 2019: they show that humans are increasingly being brought back to the forefront of AI implementation, with Forrester reporting that enterprises will add knowledge engineering to the mix to “build knowledge graphs from their expert employees and customers.” European policy and rhetoric are driving the constant improvement of AI ethics. The way to bring the rhetoric to life, as well as comply with new and upcoming regulation, will be to build explainable AI around human expertise.

Further information

rainbird.ai

TOP STORIES

-

Claude maker Anthropic valued at nearly $1tn after record AI funding round

Claude maker Anthropic valued at nearly $1tn after record AI funding round -

NASA to send rabbit-like drones to scout site for first Moon base

NASA to send rabbit-like drones to scout site for first Moon base -

Apollo, Artemis, Ali and Live Aid satellite station set for new Moon role in £37m deal

Apollo, Artemis, Ali and Live Aid satellite station set for new Moon role in £37m deal -

Four forces reshaping cyber risk in 2026

Four forces reshaping cyber risk in 2026 -

Siemens expands rail technology arm with Italian deal

Siemens expands rail technology arm with Italian deal -

Italy draws global tech investors as Europe races to build its own champions

Italy draws global tech investors as Europe races to build its own champions -

Opel turns to Chinese EV technology for new European-built SUV

Opel turns to Chinese EV technology for new European-built SUV -

Japan and Luxembourg deepen space ties as lunar race gathers pace

Japan and Luxembourg deepen space ties as lunar race gathers pace -

Polymorphic attacks: the shape-shifting threat

Polymorphic attacks: the shape-shifting threat -

‘Lost’ zip design could give space exploration a lift

‘Lost’ zip design could give space exploration a lift -

Orbitae - AI by SDG Group launches Gena Suite to scale enterprise AI

Orbitae - AI by SDG Group launches Gena Suite to scale enterprise AI -

Firms ‘wasting AI’ by using it to speed up bad habits

Firms ‘wasting AI’ by using it to speed up bad habits -

Why leadership matters when implementing AI

Why leadership matters when implementing AI -

Stratospheric telecoms blimp completes “historic” record 12-day flight over Atlantic

Stratospheric telecoms blimp completes “historic” record 12-day flight over Atlantic -

Mobile operators warn of higher bills and slower 5G rollout after energy support exclusion

Mobile operators warn of higher bills and slower 5G rollout after energy support exclusion -

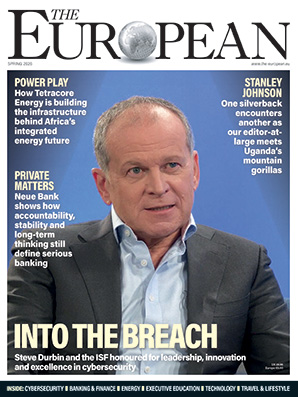

The 2026 European awards cement Steve Durbin and the ISF at the forefront of cybersecurity

The 2026 European awards cement Steve Durbin and the ISF at the forefront of cybersecurity -

These are the 10 AI trends to watch in 2026 that will drive business forward

These are the 10 AI trends to watch in 2026 that will drive business forward -

Europe launches ‘anti-kill switch’ cloud shield as Trump fears grip Brussels

Europe launches ‘anti-kill switch’ cloud shield as Trump fears grip Brussels -

Starmer summons social media chiefs to Downing Street over child safety

Starmer summons social media chiefs to Downing Street over child safety -

AIMi: bringing intelligence and speed to data migration

AIMi: bringing intelligence and speed to data migration -

GITEX Africa Morocco to host 1,450 exhibitors and startups as Marrakech event sharpens focus on AI and digital sovereignty

GITEX Africa Morocco to host 1,450 exhibitors and startups as Marrakech event sharpens focus on AI and digital sovereignty -

EXCLUSIVE: LA unveils Ghostbusters-style car to fight post-wildfire ‘toxic soup’

EXCLUSIVE: LA unveils Ghostbusters-style car to fight post-wildfire ‘toxic soup’ -

Social media giants hit with $6m verdict in landmark youth harm case

Social media giants hit with $6m verdict in landmark youth harm case -

Former Google executive launches €50m fund targeting Europe’s deep tech scale-up gap

Former Google executive launches €50m fund targeting Europe’s deep tech scale-up gap -

Airbus to acquire Ultra Cyber in UK defence cyber expansion

Airbus to acquire Ultra Cyber in UK defence cyber expansion